Defendant Compelled by Court to Produce Metadata – eDiscovery Case Law

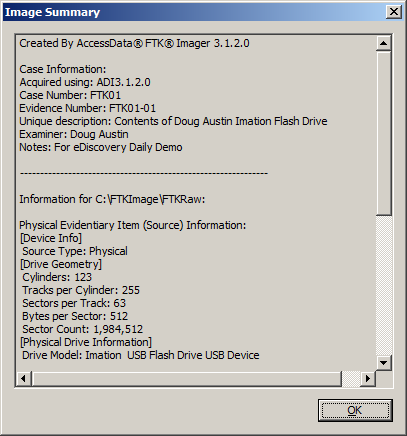

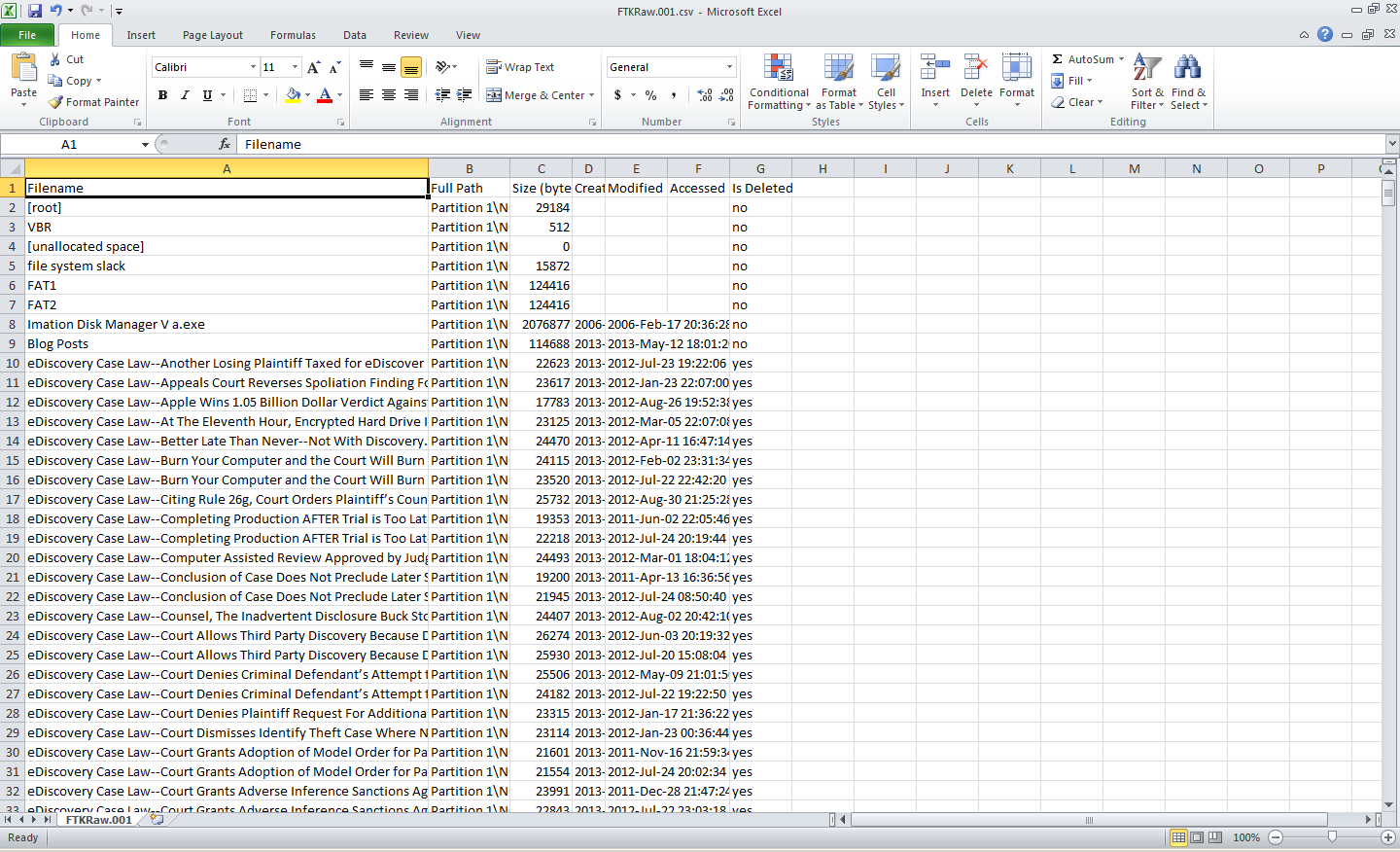

Remember when we talked about the issue of metadata spoliation resulting from “drag and drop” to collect files? Here’s a case where it appears that method may have been used, resulting in an order against the producing party.

In AtHome Care, Inc. v. The Evangelical Lutheran Good Samaritan Society, No. 1:12-cv-053-BLW (D. Idaho Apr. 30, 2013), Idaho District Judge B. Lynn Winmill granted the plaintiff’s motion to compel documents, ordering the defendant to identify and produce metadata for the documents in this case.

In this pilot project contract dispute between two health care organizations, the plaintiff filed a motion to compel after failing to resolve some of the discovery disputes with the defendant “through meet and confers and informal mediation with the Court’s staff”. One of the disputes was related to the omission of metadata in the defendant’s production.

Judge Winmill stated that “Although metadata is not addressed directly in the Federal Rules of Civil Procedure, it is subject to the same general rules of discovery…That means the discovery of metadata is also subject to the balancing test of Rule 26(b)(2)(C), which requires courts to weigh the probative value of proposed discovery against its potential burden.” {emphasis added}

“Courts typically order the production of metadata when it is sought in the initial document request and the producing party has not yet produced the documents in any form”, Judge Winmill continued, but noted that “there is no dispute that Good Samaritan essentially agreed to produce metadata, and would have produced the requested metadata but for an inadvertent change to the creation date on certain documents.”

The plaintiff claimed that the system metadata was relevant because its claims focused on the unauthorized use and misappropriation of its proprietary information, including whether the defendant used that information to create its own materials and model. The plaintiff contended “that the system metadata can answer the question of who received what information when and when documents were created”. The defendant argued that the plaintiff “exaggerates the strength of its trade secret claim”.

Weighing the value against the burden of producing the metadata, Judge Winmill ruled that “The requested metadata ‘appears reasonably calculated to lead to the discovery of admissible evidence.’ Fed.R. Civ.P. 26(b)(1). Thus, it is discoverable.” {emphasis added}

“The only question, then, is whether the burden of producing the metadata outweighs the benefit…As an initial matter, the Court must acknowledge that Good Samaritan created the problem by inadvertently changing the creation date on the documents. The Court does not find any degree of bad faith on the part of Good Samaritan — accidents happen — but this fact does weigh in favor of requiring Good Samaritan to bear the burden of production…Moreover, the Court does not find the burden all that great.”

Therefore, the plaintiff’s motion to compel production of the metadata was granted.
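For readers curious how date metadata can change inadvertently during collection, here is a minimal Python sketch. It is only an illustration, not a description of what happened in this case. It uses the file modification time as a portable stand-in, because how a file's creation date is stored and reported varies by operating system. The file names and the `compare_copy_methods` helper are hypothetical.

```python
import os
import shutil
import tempfile
import time


def compare_copy_methods(age_seconds=86400 * 365):
    """Return (original, plain copy, preserved copy) modification times.

    Hypothetical helper for illustration only.
    """
    with tempfile.TemporaryDirectory() as workdir:
        original = os.path.join(workdir, "original.txt")
        with open(original, "w") as handle:
            handle.write("sample document\n")

        # Backdate the file so it looks like it was last modified a year ago.
        old_time = time.time() - age_seconds
        os.utime(original, (old_time, old_time))

        plain_copy = os.path.join(workdir, "plain_copy.txt")
        preserved_copy = os.path.join(workdir, "preserved_copy.txt")

        # shutil.copy copies the content and permission bits, not timestamps.
        shutil.copy(original, plain_copy)
        # shutil.copy2 also attempts to preserve timestamps and other metadata.
        shutil.copy2(original, preserved_copy)

        return (
            os.path.getmtime(original),
            os.path.getmtime(plain_copy),
            os.path.getmtime(preserved_copy),
        )


original_mtime, plain_mtime, preserved_mtime = compare_copy_methods()
print("original      :", time.ctime(original_mtime))
print("plain copy    :", time.ctime(plain_mtime))
print("preserved copy:", time.ctime(preserved_mtime))
```

The plain copy carries the time of the copy operation, while the metadata-preserving copy keeps the original date. In practice, forensically sound collection tools are designed to avoid this kind of change; the sketch only shows why the choice of copy method matters.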

So, what do you think? Should a party be required to produce metadata? Please share any comments you might have, or let us know if you’d like to know more about a particular topic.

Disclaimer: The views represented herein are exclusively the views of the author, and do not necessarily represent the views held by CloudNine Discovery. eDiscoveryDaily is made available by CloudNine Discovery solely for educational purposes to provide general information about general eDiscovery principles and not to provide specific legal advice applicable to any particular circumstance. eDiscoveryDaily should not be used as a substitute for competent legal advice from a lawyer you have retained and who has agreed to represent you.